The global artificial intelligence boom has transformed NVIDIA from a graphics hardware company into the central infrastructure provider of the AI economy. As demand for AI systems accelerates in 2026, NVIDIA’s dominance in the AI chip market remains largely unmatched, driven by its leadership in GPUs, deep integration with cloud providers, and the explosive growth of AI data centers worldwide.

- The Foundation of AI: Why GPUs Still Matter

- Explosive Demand Driven by AI Data Centers

- The AWS Mega-Deal: A Turning Point for AI Infrastructure

- Vertical Integration and Ecosystem Control

- Competition Is Growing—But Still Behind

- The Compute Bottleneck: AI’s Biggest Constraint

- Strategic Investments and Expansion

- Economic Impact: A Multi-Trillion-Dollar Opportunity

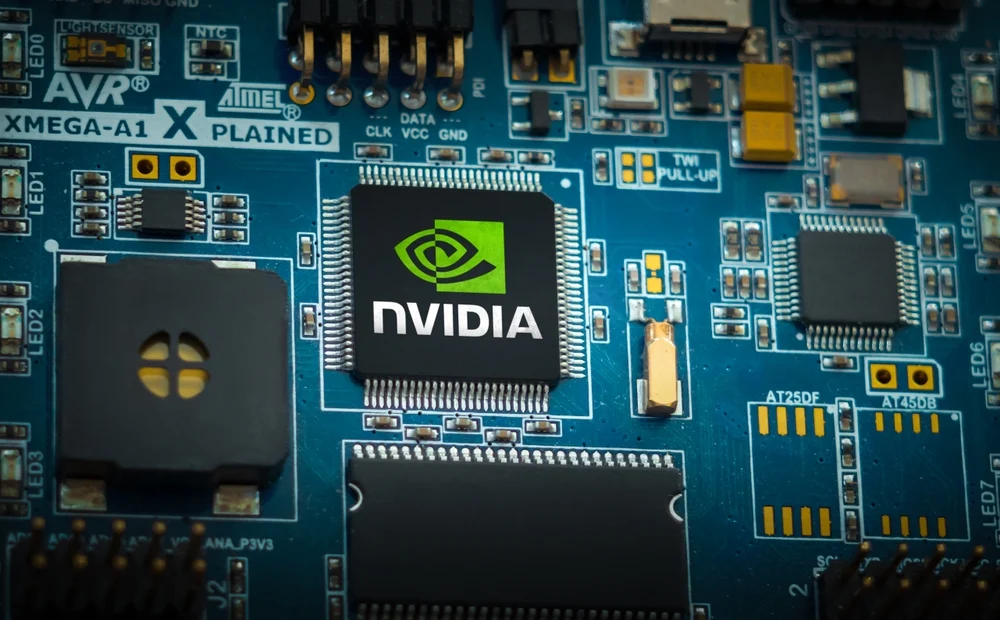

The Foundation of AI: Why GPUs Still Matter

At the core of modern AI systems are graphics processing units (GPUs)—chips designed for parallel computation. These are essential for training and running large AI models, including generative AI and autonomous systems.

NVIDIA has built its dominance on this architecture. By 2025, the company controlled roughly 90% or more of the data center GPU market, making it the default supplier for AI infrastructure globally.

Unlike CPUs, GPUs can process thousands of operations simultaneously, making them ideal for:

- Training large language models (LLMs)

- Running AI inference at scale

- Powering real-time AI applications
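To make the parallelism point concrete, here is a minimal sketch in plain Python (it runs on the CPU and is not GPU code) of matrix multiplication, a core operation in neural network workloads. The function names are illustrative assumptions, not part of any NVIDIA API. Each output cell depends only on one row of the first matrix and one column of the second, so every cell can be computed independently of the others. That independence is what lets a GPU assign cells to many threads at once.

```python
def matmul_cell(a, b, i, j):
    """Compute one output cell: the dot product of row i of a and column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a, b):
    """Multiply two matrices given as lists of rows.

    No cell reads another cell's result, so the (i, j) iterations below
    could run in any order or all at the same time. Here they simply run
    one after another on the CPU.
    """
    rows, cols = len(a), len(b[0])
    return [[matmul_cell(a, b, i, j) for j in range(cols)] for i in range(rows)]


def independent_tasks(rows, cols):
    """Number of output cells, each of which is an independent unit of work."""
    return rows * cols


if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
    print(independent_tasks(4096, 4096))
```

Even a single 4096 × 4096 product contains millions of independent cells, which is why hardware built for wide parallel execution suits this kind of workload.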

This technological advantage has positioned NVIDIA as the backbone of the AI revolution.

Explosive Demand Driven by AI Data Centers

The surge in AI adoption has led to an unprecedented expansion of AI data centers—specialized facilities designed to handle massive computational workloads.

- Global spending on AI data centers is projected to reach $650 billion in 2026

- These facilities rely heavily on GPUs, which often consume 2–4× more power per chip than traditional processors
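As a rough illustration of what the per-chip power multiplier means at facility scale, the sketch below turns it into a total power range. It reads "2–4×" as a multiplier on a baseline chip's draw. The chip count and baseline wattage are hypothetical inputs chosen only to make the arithmetic visible; they are not figures from this article, and the result ignores cooling and other overhead.

```python
def gpu_power_range_mw(chip_count, baseline_watts, low=2.0, high=4.0):
    """Return (low, high) total chip power in megawatts.

    chip_count and baseline_watts are hypothetical inputs; low and high
    are the per-chip multipliers (2x and 4x by default).
    """
    baseline_mw = chip_count * baseline_watts / 1_000_000
    return baseline_mw * low, baseline_mw * high


if __name__ == "__main__":
    # Hypothetical example: 10,000 chips against a 300 W baseline processor.
    low_mw, high_mw = gpu_power_range_mw(10_000, 300)
    print(f"{low_mw} MW to {high_mw} MW")
```

Under these assumed inputs, the same number of chips that would draw 3 MW as traditional processors would draw between 6 MW and 12 MW as GPUs.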

As companies race to build AI infrastructure, demand for NVIDIA chips has surged beyond supply.

This demand is so intense that it has triggered:

- A global shortage of high-bandwidth memory (HBM)

- Increased chip prices

- Long waiting times for GPU allocation

In practical terms, access to NVIDIA GPUs has become one of the biggest bottlenecks in AI development.

The AWS Mega-Deal: A Turning Point for AI Infrastructure

One of the clearest signals of NVIDIA’s dominance is its massive partnership with Amazon Web Services (AWS).

- NVIDIA will supply over 1 million GPUs to AWS by 2027

- These include next-generation architectures like Blackwell and Rubin

- The deal represents one of the largest AI infrastructure agreements ever

This partnership highlights a critical reality:

Hyperscalers (Amazon, Microsoft, Google) are scaling AI—and NVIDIA powers them.

Cloud providers depend on NVIDIA to meet growing enterprise demand, reinforcing its position as the default hardware layer of AI.

Vertical Integration and Ecosystem Control

NVIDIA’s dominance is not just about hardware—it’s about ecosystem control.

The company has built a vertically integrated AI stack that includes:

- GPUs (H100, Blackwell, Rubin)

- Networking hardware (Spectrum, NVLink)

- Software platforms (CUDA, AI frameworks)

- Cloud and enterprise partnerships

This creates a powerful lock-in effect:

- Developers optimize for NVIDIA’s CUDA ecosystem

- Companies build infrastructure around NVIDIA chips

- Switching costs become extremely high

As a result, competitors face not just a hardware challenge but an ecosystem barrier.

Competition Is Growing—But Still Behind

Despite NVIDIA’s dominance, competitors are increasingly investing in alternatives.

Key challengers include:

- AMD (AI accelerators)

- Google (TPUs)

- Amazon (Trainium / Inferentia chips)

- Arm Holdings (new AI CPU initiatives)

Recent developments show that even major AI companies are exploring alternatives due to limited GPU supply and high costs.

However, these alternatives face challenges:

- Smaller software ecosystems

- Lower performance in certain workloads

- Limited availability at scale

Even as competition grows, NVIDIA remains the industry standard.

The Compute Bottleneck: AI’s Biggest Constraint

One of the most important trends in 2026 is that compute availability—not ideas—is the main limitation in AI.

- AI companies struggle to secure enough GPUs

- Infrastructure expansion is capital-intensive

- Training large models requires enormous energy and hardware

This has led to a new reality:

The companies that control compute control the future of AI.

NVIDIA sits at the center of this dynamic.

Strategic Investments and Expansion

NVIDIA is also expanding beyond chip manufacturing:

- Investing billions into AI cloud providers like CoreWeave

- Acquiring AI companies and talent

- Developing full AI platforms (hardware + software + services)

Additionally, the company continues to innovate with new architectures such as:

- Blackwell GPUs for next-generation AI workloads

- Rubin platform, announced in 2026, targeting even larger-scale AI systems

These developments reinforce NVIDIA’s long-term strategy: own the full AI compute stack.

Economic Impact: A Multi-Trillion-Dollar Opportunity

NVIDIA’s rise reflects the scale of the AI market:

- The company's market valuation climbed past $4 trillion, and later $5 trillion, during the AI boom

- AI infrastructure is becoming a core global industry

- Governments and corporations are investing heavily in compute capacity

This positions NVIDIA not just as a tech company but as a critical supplier in the global digital economy.

NVIDIA’s dominance in the AI chip market is not accidental—it is the result of years of investment in GPU architecture, software ecosystems, and strategic partnerships.

In 2026, as AI demand continues to surge, NVIDIA remains:

- The primary supplier of AI compute

- A central partner to cloud providers

- A gatekeeper for AI scalability

While competition is emerging, the combination of hardware performance, ecosystem lock-in, and infrastructure demand ensures that NVIDIA remains at the center of the AI revolution.

For startups, enterprises, and governments alike, one reality is clear:

The future of AI is being built on NVIDIA chips—and demand is only accelerating.